Hudi will guarantee that its bundles stay compatible with Spark minor versions (for example 2.4, 3.1, 3.2): a single build of Hudi (for example "hudi-spark3.2-bundle") will be compatible with ALL patch versions in that minor branch (in this case 3.2.1, 3.2.0, etc.).

To achieve that, we have to remove (and ban) "spark-avro" as a dependency, which on a few occasions was the root cause of incompatibility between consecutive Spark patch versions (most recently 3.2.1 and 3.2.0, due to this PR). Instead of bundling "spark-avro" as a dependency, we will copy over the classes Hudi depends on and maintain them alongside the Hudi code base so that we can provide the aforementioned guarantee. To work around any compatibility issues that arise, we will apply local patches that keep Hudi bundles compatible within each Spark minor version branch.

The following mapping of Hudi modules to Spark minor branches is currently maintained:

- "hudi-spark3" -> 3.2.x
- "hudi-spark3.1.x" -> 3.1.x
- "hudi-spark2" -> 2.4.x

The following class hierarchies (borrowed from "spark-avro") are maintained within these Spark-specific modules to guarantee compatibility with the respective minor version branches:

- AvroSerializer
- AvroDeserializer
- AvroUtils

Each of these classes has been copied from Spark 3.2.1 (for the 3.2.x branch), 3.1.2 (for the 3.1.x branch), and 2.4.4 (for the 2.4.x branch) into its respective module. The SchemaConverters class, in turn, is shared across all of those modules given its relative stability (there are only cosmetic changes from 2.4.4 to 3.2.1). All of the aforementioned classes have their visibility limited to their packages (org.apache.spark.sql.avro, org.apache.spark.sql) so that the broader code base does not become dependent on them and instead relies on facades abstracting them.
Additionally, given that Hudi plans to support all patch versions of Spark within the aforementioned minor version branches, additional build steps were added to validate that Hudi compiles against those versions. Testing, however, is performed against the most recent patch versions of Spark with the help of Azure CI.

Brief change log:

- Removed spark-avro bundling from Hudi by default
- Scaffolded Spark 3.2.x hierarchy
- Bootstrapped Spark 3.1.x Avro serializer/deserializer hierarchy
- Bootstrapped Spark 2.4.x Avro serializer/deserializer hierarchy
- Moved ExpressionCodeGen and ExpressionPayload into the hudi-spark module
- Fixed AvroDeserializer to stay compatible with both Spark 3.2.1 and 3.2.0
- Modified bot.yml to build the full matrix of supported Spark versions
- Removed the "spark-avro" dependency from all modules
- Fixed relocation of spark-avro classes in bundles to assist in running integ-tests
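
The module-to-branch mapping described above can be expressed as a small lookup. This is only a sketch: hudi_spark_module is a hypothetical helper name, not something that exists in the Hudi build, and it covers only the three branches listed in this description.

```shell
# Map a Spark version (e.g. 3.2.1) to the Hudi Spark module that targets its
# minor branch. hudi_spark_module is a hypothetical helper, not part of Hudi.
hudi_spark_module() {
  case "$1" in
    3.2.*) echo "hudi-spark3" ;;
    3.1.*) echo "hudi-spark3.1.x" ;;
    2.4.*) echo "hudi-spark2" ;;
    *) echo "unsupported Spark version: $1" >&2; return 1 ;;
  esac
}
```

Any patch version within a supported minor branch resolves to the same module, which mirrors the compatibility guarantee stated above.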

Docker Demo for Hudi

This repo contains the docker demo resources for building the docker demo images, setting up the demo, and running Hudi in the docker demo environment.

Repo Organization

Configs for assembling docker images - /hoodie

The /hoodie folder contains all the configs for assembling the necessary docker images. The name and repository of each docker image, e.g., apachehudi/hudi-hadoop_2.8.4-trinobase_368, is defined in the maven configuration file pom.xml.

Docker compose config for the Demo - /compose

The /compose folder contains the yaml file to compose the Docker environment for running Hudi Demo.

Resources and Sample Data for the Demo - /demo

The /demo folder contains useful resources and sample data used for the Demo.

Build and Test Image locally

To build all docker images locally, you can run the script:

./build_local_docker_images.sh

To build a single image target, you can run

mvn clean pre-integration-test -DskipTests -Ddocker.compose.skip=true -Ddocker.build.skip=false -pl :<image_target> -am

# For example, to build hudi-hadoop-trinobase-docker

mvn clean pre-integration-test -DskipTests -Ddocker.compose.skip=true -Ddocker.build.skip=false -pl :hudi-hadoop-trinobase-docker -am

Alternatively, you can use the docker CLI directly under hoodie/hadoop. Note that you need to manually name your local image with the -t option to match the naming in the pom.xml, so that you can update the corresponding image repository in Docker Hub (detailed steps in the next section).

# Run under hoodie/hadoop, the <tag> is optional, "latest" by default

docker build <image_folder_name> -t <hub-user>/<repo-name>[:<tag>]

# For example, to build trinobase

docker build trinobase -t apachehudi/hudi-hadoop_2.8.4-trinobase_368

After new images are built, you can run the following script to bring up docker demo with your local images:

./setup_demo.sh dev

Upload Updated Image to Repository on Docker Hub

Once you have built the updated image locally, you can push it to the corresponding repository in the Docker Hub registry, designated by its name or tag:

docker push <hub-user>/<repo-name>:<tag>

# For example

docker push apachehudi/hudi-hadoop_2.8.4-trinobase_368
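
When a reference carries no tag, as in the example above, Docker resolves it to latest. The sketch below makes that explicit before pushing by splitting a reference into repository and tag. split_image_ref is a hypothetical helper, not part of Docker or Hudi, and it does not handle digest references (name@sha256:...).

```shell
# Print "<repository> <tag>" for an image reference, using "latest" when the
# reference has no tag. split_image_ref is a hypothetical helper.
split_image_ref() {
  ref="$1"
  last="${ref##*/}"   # component after the final slash, so a registry port is ignored
  case "$last" in
    *:*) echo "${ref%:*} ${last##*:}" ;;
    *)   echo "$ref latest" ;;
  esac
}

# Example use (not executed here):
#   set -- $(split_image_ref apachehudi/hudi-hadoop_2.8.4-trinobase_368)
#   docker push "$1:$2"
```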

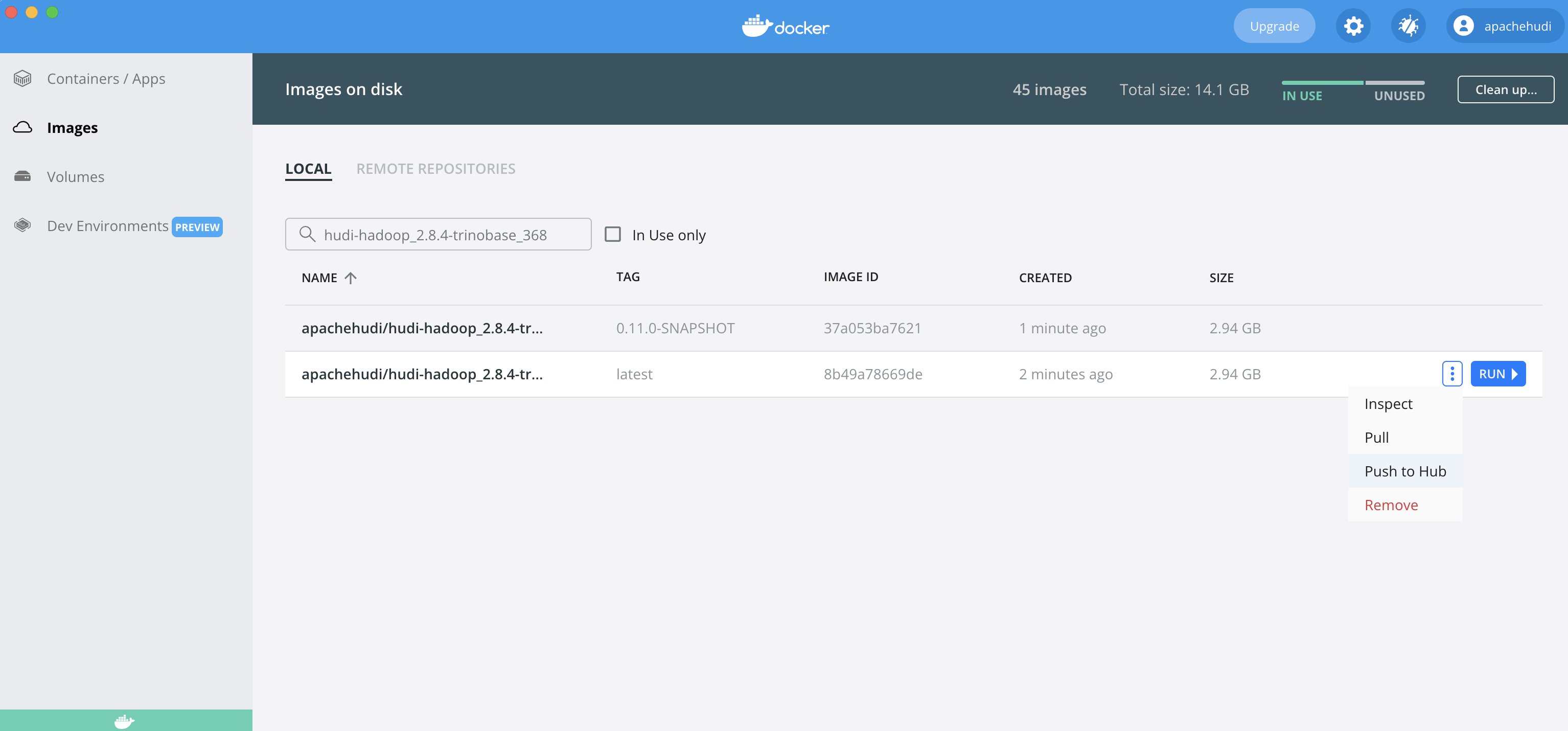

You can also easily push the image to Docker Hub using the Docker Desktop app: go to Images, search for the image by name, then click on the three dots and select Push to Hub.

Note that you need to ask for permission to upload the Hudi Docker Demo images to the repositories.

You can find more information in the Docker Hub Repositories Manual.

Docker Demo Setup

Please refer to the Docker Demo Docs page.

Building Multi-Arch Images

NOTE: The steps below require some code changes. Support for multi-arch builds in a fully automated manner is being tracked by HUDI-3601.

By default, the docker images are built for the x86_64 (amd64) architecture. Docker buildx allows you to build multi-arch images, link them together with a manifest file, and push them all to a registry with a single command. Let's say we want to build for the arm64 architecture. First, we need to ensure that the buildx setup is done locally. Please follow the steps below (taken from https://www.docker.com/blog/multi-arch-images):

# List builders

~ ❯❯❯ docker buildx ls

NAME/NODE DRIVER/ENDPOINT STATUS PLATFORMS

default * docker

default default running linux/amd64, linux/arm64, linux/arm/v7, linux/arm/v6

# If you are using the default builder, which is basically the old builder, then do the following

~ ❯❯❯ docker buildx create --name mybuilder

mybuilder

~ ❯❯❯ docker buildx use mybuilder

~ ❯❯❯ docker buildx inspect --bootstrap

[+] Building 2.5s (1/1) FINISHED

=> [internal] booting buildkit 2.5s

=> => pulling image moby/buildkit:master 1.3s

=> => creating container buildx_buildkit_mybuilder0 1.2s

Name: mybuilder

Driver: docker-container

Nodes:

Name: mybuilder0

Endpoint: unix:///var/run/docker.sock

Status: running

Platforms: linux/amd64, linux/arm64, linux/arm/v7, linux/arm/v6
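
Before building, it can help to confirm that the selected builder actually lists the target platform. The following is a minimal sketch that parses the Platforms line of the inspect output shown above. builder_supports is a hypothetical helper, and it assumes the output keeps the "Platforms: a, b, c" layout, which may differ between buildx versions.

```shell
# Read `docker buildx inspect` output on stdin and succeed only if the
# Platforms line lists the requested platform exactly.
# builder_supports is a hypothetical helper, not a docker command.
builder_supports() {
  want="$1"
  grep '^ *Platforms:' \
    | sed 's/^ *Platforms: *//' \
    | tr -d ' *' \
    | tr ',' '\n' \
    | grep -qxF "$want"
}

# Example use (not executed here):
#   docker buildx inspect mybuilder | builder_supports linux/arm64 && echo "arm64 available"
```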

Now go to <HUDI_REPO_DIR>/docker/hoodie/hadoop and change the Dockerfile to pull dependent images corresponding to arm64. For example, in base/Dockerfile (which pulls the jdk8 image), change the line FROM openjdk:8u212-jdk-slim-stretch to FROM arm64v8/openjdk:8u212-jdk-slim-stretch.
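
The FROM change described above can also be scripted instead of edited by hand. This is a sketch only: to_arm64_from is a hypothetical filter, and it assumes the base image line starts with "FROM openjdk:" exactly as quoted above.

```shell
# Read a Dockerfile on stdin and prefix the openjdk base image with the
# arm64v8/ namespace. Lines that do not start with "FROM openjdk:" pass
# through unchanged, so running the filter twice is harmless.
# to_arm64_from is a hypothetical helper.
to_arm64_from() {
  sed 's|^FROM openjdk:|FROM arm64v8/openjdk:|'
}

# Example use (not executed here), run under hoodie/hadoop:
#   to_arm64_from < base/Dockerfile > base/Dockerfile.tmp && mv base/Dockerfile.tmp base/Dockerfile
```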

Then, from the <HUDI_REPO_DIR>/docker/hoodie/hadoop directory, execute the following command to build the image and push it to the Docker Hub repo:

# Run under hoodie/hadoop, the <tag> is optional, "latest" by default

docker buildx build <image_folder_name> --platform <comma-separated,platforms> -t <hub-user>/<repo-name>[:<tag>] --push

# For example, to build base image

docker buildx build base --platform linux/arm64 -t apachehudi/hudi-hadoop_2.8.4-base:linux-arm64-0.10.1 --push

Once the base image is pushed, you can do the same for the other images. For example, change the hive Dockerfile to pull the base image with the tag corresponding to the linux/arm64 platform.

# Change below line in the Dockerfile

FROM apachehudi/hudi-hadoop_${HADOOP_VERSION}-base:latest

# as shown below

FROM --platform=linux/arm64 apachehudi/hudi-hadoop_${HADOOP_VERSION}-base:linux-arm64-0.10.1

# and then build & push from under hoodie/hadoop dir

docker buildx build hive_base --platform linux/arm64 -t apachehudi/hudi-hadoop_2.8.4-hive_2.3.3:linux-arm64-0.10.1 --push

Similarly, for images that depend on hive (e.g. base spark, sparkmaster, sparkworker and sparkadhoc), change the corresponding Dockerfile to pull the base hive image with the tag corresponding to arm64. Then build and push using the docker buildx command.

For the sake of completeness, here is a patch which shows what changes to make in the Dockerfiles (assuming the tag is named linux-arm64-0.10.1), and below is the list of docker buildx commands.

docker buildx build base --platform linux/arm64 -t apachehudi/hudi-hadoop_2.8.4-base:linux-arm64-0.10.1 --push

docker buildx build datanode --platform linux/arm64 -t apachehudi/hudi-hadoop_2.8.4-datanode:linux-arm64-0.10.1 --push

docker buildx build historyserver --platform linux/arm64 -t apachehudi/hudi-hadoop_2.8.4-history:linux-arm64-0.10.1 --push

docker buildx build hive_base --platform linux/arm64 -t apachehudi/hudi-hadoop_2.8.4-hive_2.3.3:linux-arm64-0.10.1 --push

docker buildx build namenode --platform linux/arm64 -t apachehudi/hudi-hadoop_2.8.4-namenode:linux-arm64-0.10.1 --push

docker buildx build prestobase --platform linux/arm64 -t apachehudi/hudi-hadoop_2.8.4-prestobase_0.217:linux-arm64-0.10.1 --push

docker buildx build spark_base --platform linux/arm64 -t apachehudi/hudi-hadoop_2.8.4-hive_2.3.3-sparkbase_2.4.4:linux-arm64-0.10.1 --push

docker buildx build sparkadhoc --platform linux/arm64 -t apachehudi/hudi-hadoop_2.8.4-hive_2.3.3-sparkadhoc_2.4.4:linux-arm64-0.10.1 --push

docker buildx build sparkmaster --platform linux/arm64 -t apachehudi/hudi-hadoop_2.8.4-hive_2.3.3-sparkmaster_2.4.4:linux-arm64-0.10.1 --push

docker buildx build sparkworker --platform linux/arm64 -t apachehudi/hudi-hadoop_2.8.4-hive_2.3.3-sparkworker_2.4.4:linux-arm64-0.10.1 --push
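
Since the commands above differ only in the image folder and repository name, they can be generated from a short table. The sketch below is a dry run that only prints the commands. print_buildx_cmds is a hypothetical helper, and the table shows just the first three pairs from the list above. Images that others depend on (base, then hive_base) should be listed before their dependents.

```shell
# Print one `docker buildx build ... --push` command per "<folder> <repo>"
# pair read from stdin. Nothing is executed; the output can be reviewed first.
# print_buildx_cmds is a hypothetical helper.
print_buildx_cmds() {
  platform="$1"
  tag="$2"
  while read -r folder repo; do
    [ -n "$folder" ] || continue   # skip blank lines
    echo "docker buildx build $folder --platform $platform -t apachehudi/$repo:$tag --push"
  done
}

# Folder and repository pairs taken from the command list above.
print_buildx_cmds linux/arm64 linux-arm64-0.10.1 <<'EOF'
base hudi-hadoop_2.8.4-base
datanode hudi-hadoop_2.8.4-datanode
historyserver hudi-hadoop_2.8.4-history
EOF
```

After checking the printed commands, they can be run under hoodie/hadoop by piping the output to sh.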

Once all the required images are pushed to the Docker Hub repos, we need to make one additional change in the docker compose file. Apply this patch to the docker compose file so that setup_demo pulls images with the correct tag for arm64. We should then be ready to run the setup script and follow the docker demo.
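
If the patch is not at hand, a tag rewrite along the following lines may achieve the same effect. This is a sketch under assumptions, not the patch itself: retag_compose is a hypothetical filter, and it assumes the compose file references the demo images as "image: apachehudi/<repo>:latest". Check the actual file before relying on it.

```shell
# Read a compose file on stdin and rewrite apachehudi image references that
# end in :latest so they use the given tag. Other image lines are untouched.
# retag_compose is a hypothetical helper; the "image: apachehudi/...:latest"
# layout is an assumption about the compose file.
retag_compose() {
  tag="$1"
  sed "s|^\([[:space:]]*image:[[:space:]]*apachehudi/[^:]*\):latest[[:space:]]*\$|\1:$tag|"
}

# Example use (not executed here); <compose-file> is a placeholder:
#   retag_compose linux-arm64-0.10.1 < <compose-file> > compose-arm64.yml
```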